Just wanted to do a quick video to cover the process of deploying ADF artifacts from the Development environment to higher environments like QA, etc. These are the basic steps to get it done (please watch the video for detailed instructions): https://youtu.be/7fsIwWheyDk Create a new project in DevOps if there is no project set up already. Make … Continue reading How to Deploy Azure Data Factory (ADF) from Dev to QA using Devops

Category: Azure Data Lake

Incrementally Load New Files in Azure Data Factory by Looking Up Latest Modified Date in Destination Folder

This is a common business scenario, but it turns out that you have to do quite a bit of work in Azure Data Factory to make it work. So the goal is to take a look at the destination folder, find the file with the latest modified date, and then use that date as the … Continue reading Incrementally Load New Files in Azure Data Factory by Looking Up Latest Modified Date in Destination Folder
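The idea in the excerpt above can be illustrated outside of Data Factory. The following is only a minimal sketch in Python against local folders, not the pipeline from the post: it assumes the latest modified date in the destination acts as a cutoff, and that source files modified after it are the ones still to be loaded. The function names `latest_modified` and `files_to_load` are hypothetical.

```python
from datetime import datetime, timezone
from pathlib import Path
from typing import List, Optional


def latest_modified(folder: Path) -> Optional[datetime]:
    """Return the newest modification time among the files in folder, or None if it has no files."""
    times = [
        datetime.fromtimestamp(p.stat().st_mtime, tz=timezone.utc)
        for p in folder.iterdir()
        if p.is_file()
    ]
    return max(times, default=None)


def files_to_load(source: Path, destination: Path) -> List[Path]:
    """List source files modified after the newest file in destination (assumed cutoff rule)."""
    cutoff = latest_modified(destination)
    candidates = [p for p in source.iterdir() if p.is_file()]
    if cutoff is None:
        # Nothing has been loaded yet, so every source file counts as new.
        return sorted(candidates)
    return sorted(
        p
        for p in candidates
        if datetime.fromtimestamp(p.stat().st_mtime, tz=timezone.utc) > cutoff
    )
```

Calling `files_to_load(Path("source"), Path("destination"))` returns the files a copy step would then pick up. An empty destination is treated as a first run, so the whole source folder is returned.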

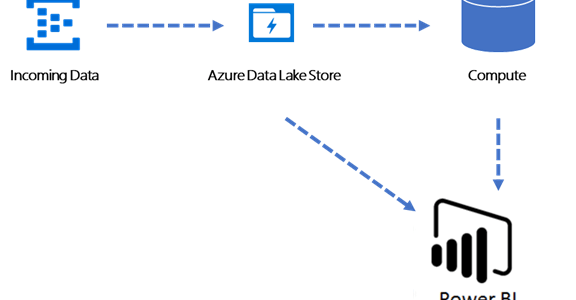

How to make Azure Databricks work with Azure Data Lake Storage Gen2 and Power BI

This post is the beginning of a series of articles about building analytical capabilities in Azure using data lake, Databricks and Power BI. On the surface, those technologies seem like they were specifically designed to complement each other as they provide a set of foundational capabilities necessary to develop scalable and cost-effective business intelligence … Continue reading How to make Azure Databricks work with Azure Data Lake Storage Gen2 and Power BI

Optimizing Azure Analysis Services Partitions for Azure Data Warehouse External Tables

Recently, I had a chance to work with Azure Analysis Services (AS) sourcing data from Azure Data Warehouse (DW) external tables. Optimizing the processing of the Azure Analysis Services partitions to use with the Azure DW external tables is a bit different from working with the regular (physical) data tables, and I will discuss the … Continue reading Optimizing Azure Analysis Services Partitions for Azure Data Warehouse External Tables

Giving access to Azure Active Directory Application to Enable Polybase

I have run into this a few times now, and every time it took me a while to figure out what's going on, so I figured if I wrote about this on my blog, maybe I would not forget about it next time. Microsoft has a great article here that details how to set up Azure … Continue reading Giving access to Azure Active Directory Application to Enable Polybase

PowerShell script to load data into Data Lake Store

In an earlier post, we talked about a self-service process to hydrate the Data Lake Store. We also mentioned the need to use PowerShell to load data files larger than 2 GB. Here is the PowerShell script: Provide your credentials to log in to Azure: $MyAzureName = "<YourAzureUsername>"; $MyAzurePassword = ConvertTo-SecureString '<YourAzurePassword>' -AsPlainText -Force; $AzureRMCredential = New-Object System.Management.Automation.PSCredential($MyAzureName, … Continue reading PowerShell script to load data into Data Lake Store

Power BI and Azure Data Lake

Do you have to be a developer in order to implement a solution that ties together Power BI and Azure Data Lake? I argue that you don't. However, there are several things that you need to be familiar with before you get going. Therefore, I decided to cover several of them in this article. … Continue reading Power BI and Azure Data Lake